Here is a look at how artificial intelligence (AI), machine learning (ML), and deep learning (DL) differ.

From being dismissed as science fiction to becoming an integral part of several hugely popular film series, notably the one starring Arnold Schwarzenegger, artificial intelligence has been part of our lives for longer than we realize. In fact, the Turing Test, frequently used to benchmark the ‘intelligence’ in artificial intelligence, is an intriguing process in which an AI must convince a human, through a conversation, that it is not a machine. A range of other tests has been designed to gauge how developed an artificial intelligence is, such as Goertzel’s Coffee Test and Nilsson’s Employment Test, which evaluate a robot’s performance in various human tasks.

As a field, AI has probably seen the most ups and downs over the past 50 years. On the one hand, it is hailed as the frontier of the next technological revolution; on the other, it is viewed with fear because it is thought to have the potential to surpass human intelligence and thus achieve world domination! However, most scientists agree that we are at the nascent stages of developing AI capable of such feats, and research continues unfettered by these fears.

Applications of AI

In the early days, the goal of researchers was to build complex machines capable of exhibiting some semblance of human intelligence, a concept we now term ‘general intelligence’. While it has been a popular idea in films and in science fiction, we are a long way from creating it for real.

Specialised applications of AI, however, allow us to use image classification and facial recognition as well as smart personal assistants such as Siri and Alexa. These usually combine multiple algorithms to deliver this functionality to the end user, but may broadly be classified as AI.

The term machine learning (ML) was originally used to refer to the process of using algorithms to parse data, build models that can learn from it, and ultimately make predictions using the learned parameters. It encompassed various approaches, such as decision trees, clustering, regression, and Bayesian methods, that did not quite achieve the ultimate goal of ‘general intelligence’.
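The loop described above (read data, fit a model, predict with the learned parameters) can be sketched with simple linear regression, one of the approaches listed. This is only a minimal illustration in plain Python: the function names `fit_line` and `predict` and the sample data are hypothetical and do not come from any particular library.

```python
def fit_line(xs, ys):
    """Learn slope and intercept for y = slope * x + intercept (least squares)."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need at least two (x, y) pairs of equal length")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    var_x = sum((x - mean_x) ** 2 for x in xs)
    if var_x == 0:
        raise ValueError("x values must not all be identical")
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(params, x):
    """Use the learned parameters to predict y for a new x."""
    slope, intercept = params
    return slope * x + intercept


# Hypothetical training data (assumption: made up for this sketch).
train_x = [0.0, 1.0, 2.0, 3.0]
train_y = [1.0, 3.0, 5.0, 7.0]

params = fit_line(train_x, train_y)   # "learning" step
prediction = predict(params, 4.0)     # prediction on unseen input
print(params, prediction)
```

The same fit-then-predict shape applies to the other approaches mentioned, although how each one learns its parameters differs.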

While it started as a small part of AI, burgeoning interest has propelled ML to the forefront of research, and it is now used across domains. Growing hardware support, together with advances in algorithms, notably in pattern recognition, has made ML accessible to a far larger audience, leading to wider adoption.

Applications of ML

Initially, the primary applications of ML were limited to computer vision and pattern recognition. This was before the success and accuracy it enjoys today. Back then, ML seemed a fairly tame field, with its scope limited to academia and education.

Today we use ML without even being aware of how dependent we are on it for our everyday activities. From Google’s search team looking to replace the PageRank algorithm with an improved ML algorithm called RankBrain, to Facebook automatically suggesting friends to tag in a picture, we are surrounded by use cases for ML algorithms.

A key ML approach that remained dormant for a few decades was artificial neural networks. They eventually gained wide acceptance when improved processing capabilities became available. A neural network simulates the activity of a brain’s neurons in a layered fashion, and data propagates through it in a similar manner, enabling machines to learn more about a given set of observations and make accurate predictions.
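The layered propagation described above can be sketched as a forward pass through a tiny network. This is a simplified illustration under stated assumptions: the weights are hand-picked rather than learned, the sigmoid activation is one common choice among many, and the names `layer_forward` and `network_forward` are hypothetical.

```python
import math


def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def layer_forward(inputs, weights, biases):
    """One layer: each neuron takes a weighted sum of the inputs,
    adds its bias, and applies the activation function."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]


def network_forward(inputs, layers):
    """Feed the outputs of each layer into the next one."""
    activations = inputs
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations


# Hypothetical network: 2 inputs -> 2 hidden neurons -> 1 output neuron.
# Each layer is (weights, biases); weights has one row per neuron.
layers = [
    ([[0.5, -0.5], [1.0, 1.0]], [0.0, -1.0]),
    ([[1.0, -1.0]], [0.0]),
]

output = network_forward([1.0, 1.0], layers)
print(output)
```

Training, which adjusts the weights and biases so that the outputs match known observations, is deliberately left out of this sketch.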

These neural networks, which had until recently been ignored save for a few researchers led by Geoffrey Hinton, have now demonstrated exceptional potential for handling large volumes of data and enhancing the practical applications of machine learning. The accuracy of these models allows reliable services to be offered to end users, because false positives are eliminated almost completely.

Applications of DL

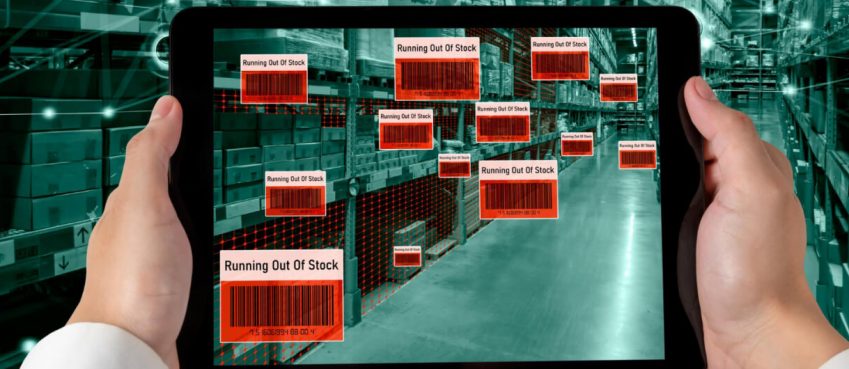

DL has large-scale business applications because of its ability to learn from millions of observations at once. Though computationally intensive, it is often still the preferred choice because of its high accuracy. This includes many image recognition applications that relied on conventional computer vision practices before the emergence of deep learning. Autonomous vehicles and recommendation systems (like the ones used by Netflix and Amazon) are among the most popular applications of DL algorithms.

AI itself is a broad term that covers use cases ranging from a game-playing bot to the voice recognition system inside Siri, as well as converting text into speech and vice versa. It is conventionally thought to have three categories: narrow AI, which is specialised for a single task; artificial general intelligence (AGI), which can match human reasoning across tasks; and super-intelligent AI, which implies a point where AI exceeds human intelligence entirely.

ML is a subset of AI. It involves learning from data in order to make informed decisions at a later stage, and it enables AI to be applied to a wide spectrum of problems. ML allows systems to reach their own conclusions after a learning process that trains the system towards a goal. Numerous tools have emerged that give a larger audience access to the power of ML algorithms, including Python libraries such as scikit-learn, frameworks such as MLlib for Apache Spark, applications such as RapidMiner, and so on.

Another sub-division and subset of AI is DL, which harnesses the power of deep neural networks to train models on large data sets and make accurate predictions in the fields of image, voice, and face recognition, among others. The low trade-off between training time and computation errors makes it an attractive option for many businesses to switch their core practices to deep learning or to integrate these algorithms into their systems.

Classifying applications: The boundaries that separate the applications of AI, ML, and DL are fairly fuzzy. However, since their scopes do differ, it is possible to identify which subset a particular application belongs to. Usually, we classify personal assistants and other kinds of bots that help with specialised tasks, such as playing games, as AI because of their broader nature. These include search capabilities, filtering and short-listing, voice recognition, and text-to-speech conversion bundled into an agent.

Practices that fall into a narrower category, such as Big Data analytics, data mining, pattern recognition, and the like, are placed under the spectrum of ML algorithms. Typically, these involve systems that ‘learn’ from data and apply that learning to a specialised task.

Finally, applications belonging to a niche category, one that involves a large corpus of text or image-based data used to train a model on graphics processing units (GPUs), call for DL algorithms. These usually include specialised image and video recognition tasks applied to a broader use, for example, autonomous driving and navigation.
